Weed recognition technologies: development and opportunity for Australian grain production

Author: Michael Walsh, Asher Bender and Guy Coleman (University of Sydney) | Date: 10 Aug 2021

Take home messages

- Visual spectrum weed images can be used to develop highly accurate weed recognition algorithms

- The ready availability of low-cost digital camera and processor technologies has created the opportunity for superior weed recognition capability

- Accuracy of recognition algorithms continues to improve, increasing the opportunity for precise weed detection and identification in Australian cropping systems

- Currently there is a lack of suitably collected and annotated weed image datasets that encompass the diversity of crop and weed species, as well as the complexity of the Australian grain production environment.

Background

Site-specific weed control (SSWC) involves targeting individual weeds with control treatments, creating the potential to substantially reduce weed control inputs where weed densities are low. The availability of low-cost, durable processors and digital cameras, combined with increasingly accurate recognition technologies, has enabled highly accurate weed recognition in fallow and in-crop scenarios. Globally, there is currently considerable research and development activity aimed at delivering SSWC across a range of production systems. Australian grain producers lead the world in the use of SSWC in fallow systems, and their positive experience has created demand for this approach for in-crop weed control.

Reflectance-based weed detection

In the 1980s and 1990s, the development of technologies that could detect living plants led to the introduction of SSWC treatments for fallow weed control (Haggar et al. 1983; Felton 1990; Visser and Timmermans 1996). These weed detection systems used a relatively simple process in which spectral filters and photodiode sensors detect growing (green) plant tissue. As all living plants present in a fallow are considered weeds, the contrast between red light absorbed by chlorophyll and near-infrared (NIR) light reflected by living leaf tissue enables discrimination between these plants and the background soil or crop residues (Visser and Timmermans 1996). With additional light sources, these weed detection systems can be used in a range of light conditions, including at night.
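
The red/NIR contrast described above is often summarised as a normalised difference vegetation index, NDVI = (NIR - Red) / (NIR + Red), which is high for green tissue and close to zero for soil and residue. The sketch below shows how a single sensor reading could be turned into a spray/no-spray decision. It is a minimal illustration only, not the algorithm of any commercial spot-spraying sensor; the reflectance values and the 0.3 threshold are hypothetical.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalised difference vegetation index from NIR and red reflectance."""
    total = nir + red
    if total == 0:  # no reflected light measured
        return 0.0
    return (nir - red) / total


def plant_detected(nir: float, red: float, threshold: float = 0.3) -> bool:
    """Flag living green tissue when NDVI exceeds a (hypothetical) threshold."""
    return ndvi(nir, red) > threshold


# Hypothetical reflectance readings (fraction of incident light) for three targets.
readings = {
    "bare soil": (0.30, 0.25),
    "crop residue": (0.35, 0.28),
    "green weed": (0.50, 0.05),
}
for target, (nir, red) in readings.items():
    action = "spray" if plant_detected(nir, red) else "no spray"
    print(f"{target}: NDVI {ndvi(nir, red):.2f} -> {action}")
```

Because residue and soil reflect red and NIR light in similar proportions, their NDVI stays low, whereas green tissue absorbs most red light and pushes the index up.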

In Australia, reflectance-based weed sensing systems have been used for over two decades in spot-spraying systems that are now widely adopted by growers for fallow weed control (McCarthy et al. 2010). Spot-spraying treatments can effectively control fallow weeds with substantially less herbicide. The substantial savings in weed control costs from SSWC treatments have created the opportunity to use more expensive herbicide treatments and non-chemical methods to manage herbicide-resistant weed problems (Walsh et al. 2020).
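
The saving described above comes down to simple arithmetic: herbicide use scales with the fraction of the paddock that is actually sprayed. The sketch below uses purely hypothetical figures (a 100 ha fallow, a product rate of 2 L/ha, 5% of the area sprayed, and product prices of $10/L and $40/L) to show how a spot-spraying saving can make a dearer product affordable.

```python
def herbicide_use(area_ha: float, rate_l_per_ha: float, sprayed_fraction: float = 1.0) -> float:
    """Litres of product applied when only part of the area is sprayed."""
    return area_ha * rate_l_per_ha * sprayed_fraction


# Hypothetical scenario: 100 ha fallow, 2 L/ha product rate.
broadcast_l = herbicide_use(100, 2.0)                    # blanket application
spot_l = herbicide_use(100, 2.0, sprayed_fraction=0.05)  # spot spraying 5% of the area

saving = 1 - spot_l / broadcast_l

# Hypothetical prices: a cheaper product broadcast vs a dearer product spot-sprayed.
broadcast_cost = broadcast_l * 10.0
spot_cost_premium = spot_l * 40.0

print(f"Broadcast: {broadcast_l:.0f} L, ${broadcast_cost:.0f}")
print(f"Spot spray: {spot_l:.0f} L, ${spot_cost_premium:.0f}")
print(f"Herbicide saving: {saving:.0%}")
```

The lower the weed density, the smaller the sprayed fraction and the larger the saving, which is why SSWC is most attractive in low-density situations.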

Camera-based weed detection

The expanded use of digital cameras and machine learning (ML) algorithms for image-based weed recognition, combined with smaller, more powerful processors, has enabled the development of field-scale, real-time SSWC for in-crop scenarios. The Raspberry Pi is an example of a low-cost single-board computer, originally developed as a teaching resource to promote computer science in schools. When coupled with a digital camera, the Raspberry Pi can perform many simple computer vision tasks, including weed detection in fallows. Real-time SSWC systems have previously been developed using Raspberry Pi computers for plant feature-based weed detection (Sujaritha et al. 2017; Tufail et al. 2021). Recent work has focussed on promoting the accessibility and availability of these technologies for building fallow weed detection systems to the Australian weed control community. Although these camera-based weed detection systems are less expensive than current reflectance-based sensors, offer greater scope for development and are potentially more effective, their use has so far been limited.
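
A simple way to detect green plants in camera images of a fallow is a colour index such as the excess green index (ExG) described by Woebbecke et al. (1995): ExG = 2g - r - b, where r, g and b are each channel divided by R + G + B. The sketch below applies ExG and a threshold to a tiny RGB image held as nested lists. The pixel values and the 0.1 threshold are hypothetical, and a working Raspberry Pi system would typically process full camera frames with an image library. It illustrates the general colour-threshold idea, not any specific published detector.

```python
def excess_green(r: int, g: int, b: int) -> float:
    """Excess green index on chromatic coordinates: (2G - R - B) / (R + G + B)."""
    total = r + g + b
    if total == 0:  # black pixel: no colour information
        return 0.0
    return (2 * g - r - b) / total


def green_mask(image, threshold=0.1):
    """True where a pixel's ExG exceeds the (hypothetical) threshold."""
    return [[excess_green(*pixel) > threshold for pixel in row] for row in image]


def green_fraction(mask) -> float:
    """Share of pixels flagged as green plant material."""
    flags = [flag for row in mask for flag in row]
    return sum(flags) / len(flags)


# Hypothetical 2 x 2 RGB image: brownish soil/residue on the left, green leaves on the right.
image = [
    [(120, 100, 80), (60, 140, 50)],
    [(130, 110, 90), (70, 150, 60)],
]
mask = green_mask(image)
print(mask)
print(green_fraction(mask))
```

In a fallow, any pixel flagged as green can be treated as weed. In a crop, the same index also flags the crop plants, which is the limitation discussed in the next section.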

Development of machine learning (ML) based in-crop weed recognition for Australian grain production

Accurate recognition of commonly occurring weeds in Australian grain crops requires a highly sophisticated approach that can manage the complexity of crop-weed scenarios. The substantial benefits of using SSWC for fallow weed control have created demand for in-crop SSWC across the cropping regions. Accurate weed recognition is more readily achieved in horticultural crops, which have highly structured and predictable planting arrangements, slow travel speeds and consistent backgrounds. By contrast, in large-scale grain production systems the differences between crop and weed appearance are less pronounced, which makes reliable SSWC harder to develop. Dense crop coverage exacerbates this challenge, as the large amount of visual clutter makes it difficult to distinguish individual plants. The reflectance and simple image-based weed detection methods (e.g. colour thresholds and leaf edge detection) developed for fallow SSWC cannot deal with this complexity.

The substantial advance that an ML approach offers is the ability to reliably differentiate between weed and crop plants, potentially to the point of identifying plant species. This opens a whole new application domain for in-crop SSWC. Digitally collected imagery has been identified as a source of the type and quantity of data needed to discriminate accurately between crop and weed plants (Thompson et al. 1991; Woebbecke et al. 1995). Imaging sensors such as the standard digital camera provide richer data streams, with three channels (red, green and blue [RGB]) of spatial and spectral intensity information. These richer data can be used by ML approaches to develop accurate weed recognition algorithms (Wang et al. 2019).
With the promise of highly accurate (99%) in-crop weed recognition, there is now considerable research directed at developing SSWC for cropping systems. These efforts are now resulting in commercially available detection systems for in-crop SSWC.

Recent examples of weed recognition algorithm development for Australian grain cropping

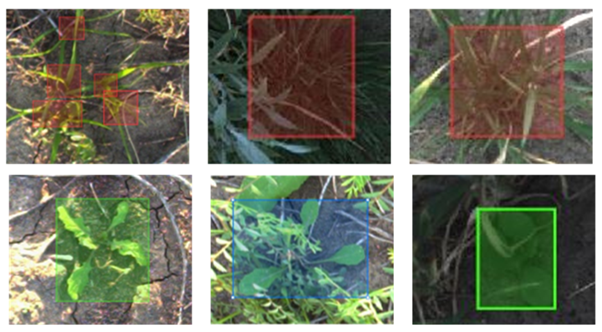

As part of the recently completed GRDC-invested project ‘Machine Learning for weed recognition’, weed recognition algorithms were developed for annual ryegrass (Lolium rigidum) and turnip weed (Rapistrum rugosum) plants present in wheat and chickpea crops. The weed recognition context evaluated was the early post-emergence stage, when crop canopies are open and weeds are readily visible in images collected from above. Using digital cameras mounted at a set height above the crop canopy, images of wheat and chickpea crops were collected at Narrabri and Cobbitty (NSW) during the 2019 and 2020 winter growing seasons. The resulting two-season dataset covers variable background and lighting conditions as well as different crop and weed growth stages. To prepare the dataset for developing and training ML recognition algorithms, annual ryegrass and turnip weed plants in the images were manually annotated with bounding boxes using ‘Labelbox’ image annotation software (Figure 1).
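
Before training a detector such as YOLOv5, bounding-box annotations are converted to the model's label format: one text line per object, giving a class index followed by the box centre x, centre y, width and height, each normalised by the image dimensions. The sketch below assumes a hypothetical annotation record holding the box's top-left corner and size in pixels (this is not Labelbox's actual export schema) and a hypothetical class mapping.

```python
from dataclasses import dataclass

# Hypothetical class-index mapping for the two weed species.
CLASS_IDS = {"annual_ryegrass": 0, "turnip_weed": 1}


@dataclass
class BoxAnnotation:
    """Hypothetical annotation record: top-left corner and size, in pixels."""
    label: str
    left: float
    top: float
    width: float
    height: float


def to_yolo_line(box: BoxAnnotation, image_width: int, image_height: int) -> str:
    """Return 'class x_centre y_centre width height', normalised to 0-1."""
    x_centre = (box.left + box.width / 2) / image_width
    y_centre = (box.top + box.height / 2) / image_height
    w = box.width / image_width
    h = box.height / image_height
    return f"{CLASS_IDS[box.label]} {x_centre:.6f} {y_centre:.6f} {w:.6f} {h:.6f}"


box = BoxAnnotation("turnip_weed", left=100, top=200, width=50, height=40)
print(to_yolo_line(box, image_width=1000, image_height=800))
```

Normalising by image size keeps the labels valid if images are resized for training.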

Figure 1. Sample bounding box annotations. Top row (red boxes): annual ryegrass (Lolium rigidum). Bottom row (green boxes): turnip weed (Rapistrum rugosum).

A range of convolutional neural network (CNN) architectures are freely available for object recognition tasks, and these architectures are continually being challenged and improved by the machine learning community. To evaluate weed recognition capability, two recently developed ML architectures, YOLOv5 (June 2020) and EfficientDet (June 2020), as well as the more 'classical' Faster R-CNN architecture (2015), were trained on the annual ryegrass and turnip weed dataset to develop recognition algorithms. To determine whether the image background (crop type) affected weed recognition, the 2,000-image dataset was split into three scenarios. In scenario one, only images of weeds in wheat were used for training (~1,300) and testing (~300). In scenario two, only images of weeds in chickpea were used for training (~200) and testing (~50). In scenario three, the two datasets were combined, and images of weeds in both wheat and chickpea were used for training (~1,500) and testing (~350).
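
The three scenarios can be sketched as a per-crop split, so that the combined scenario reuses the same held-out test images as the single-crop scenarios. Whether the original study combined its splits this way is not stated; the 80/20 ratio, fixed random seed, image counts and record format below are assumptions chosen to approximate the reported ~1,300/~300 and ~200/~50 splits.

```python
import random


def split_by_crop(records, test_fraction=0.2, seed=0):
    """Shuffle and split each crop's images separately into (train, test) lists."""
    splits = {}
    for crop in sorted({record["crop"] for record in records}):
        subset = [record for record in records if record["crop"] == crop]
        random.Random(seed).shuffle(subset)  # fixed seed for a reproducible split
        n_test = round(len(subset) * test_fraction)
        splits[crop] = (subset[n_test:], subset[:n_test])
    return splits


# Hypothetical image records (file names and counts are illustrative only).
records = [{"image": f"wheat_{i:04d}.jpg", "crop": "wheat"} for i in range(1600)]
records += [{"image": f"chickpea_{i:04d}.jpg", "crop": "chickpea"} for i in range(250)]

splits = split_by_crop(records)
wheat_train, wheat_test = splits["wheat"]            # scenario one
chickpea_train, chickpea_test = splits["chickpea"]   # scenario two
combined_train = wheat_train + chickpea_train        # scenario three
combined_test = wheat_test + chickpea_test

scenarios = {
    "weeds in wheat": (wheat_train, wheat_test),
    "weeds in chickpea": (chickpea_train, chickpea_test),
    "weeds in wheat and chickpea": (combined_train, combined_test),
}
for name, (train, test) in scenarios.items():
    print(f"{name}: {len(train)} train, {len(test)} test")
```

Keeping the test images fixed across scenarios means any difference in results reflects the training data rather than a change in what the models were tested on.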

The precision across all classes (wheat, chickpea, annual ryegrass, turnip weed and background) reached at most approximately 0.3, achieved by the YOLOv5 S algorithm (Table 1). This is much lower than the standard of 0.5 achieved by this algorithm on urban image datasets, clearly indicating the difficulty of weed recognition in cropping systems. For all ML architectures and across all three crop scenarios, turnip weed was consistently recognised with higher precision (up to ~0.6) than annual ryegrass (up to ~0.08). The superior recognition of the broadleaf weed (turnip weed) compared with the grass weed (annual ryegrass) reflects the respective challenges of these weed types. Broadleaf weeds have a very different and distinct phenotype from a cereal grain crop, which makes identifying them a simpler task for both human experts and ML algorithms. Conversely, grass weeds can be nearly indistinguishable from a cereal crop and pose a difficult challenge even for human experts annotating the data. Recognition of turnip weed was substantially more precise in wheat (0.6) than in chickpea (0.1) crops. This potentially reflects the influence of differences in plant morphology between the crop and weed species, but also the smaller chickpea dataset.
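
The exact procedure used to compute precision is not specified here. A common convention for object detectors is to count a predicted box as a true positive when its intersection over union (IoU) with a not-yet-matched ground-truth box of the same class is at least 0.5, and to report precision as true positives divided by all predictions. The sketch below implements that simplified, single-threshold version; summary metrics such as mean average precision (mAP), which average over recall levels and often over several IoU thresholds, are more involved. The boxes and labels are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision(predictions, ground_truth, iou_threshold=0.5):
    """predictions: (label, confidence, box); ground_truth: (label, box).

    Highest-confidence predictions are matched first; each ground-truth box
    can be matched at most once. Unmatched predictions are false positives.
    """
    if not predictions:
        return 0.0
    matched = set()
    true_positives = 0
    for label, _, box in sorted(predictions, key=lambda p: p[1], reverse=True):
        best_index, best_iou = None, iou_threshold
        for index, (gt_label, gt_box) in enumerate(ground_truth):
            if index in matched or gt_label != label:
                continue
            overlap = iou(box, gt_box)
            if overlap >= best_iou:
                best_index, best_iou = index, overlap
        if best_index is not None:
            matched.add(best_index)
            true_positives += 1
    return true_positives / len(predictions)


def per_class_precision(predictions, ground_truth, label, iou_threshold=0.5):
    """Precision restricted to one class, as in the per-weed columns of Table 1."""
    return precision(
        [p for p in predictions if p[0] == label],
        [g for g in ground_truth if g[0] == label],
        iou_threshold,
    )


# Hypothetical ground truth and detections for one image.
ground_truth = [
    ("turnip_weed", (10, 10, 50, 50)),
    ("annual_ryegrass", (100, 100, 120, 160)),
]
predictions = [
    ("turnip_weed", 0.9, (12, 12, 50, 52)),          # overlaps the turnip weed well
    ("annual_ryegrass", 0.6, (130, 100, 150, 160)),  # misses the ryegrass plant
    ("turnip_weed", 0.4, (200, 200, 220, 220)),      # nothing there
]
print(round(precision(predictions, ground_truth), 3))
for label in ("turnip_weed", "annual_ryegrass"):
    print(label, per_class_precision(predictions, ground_truth, label))
```

A thin ryegrass leaf is easily missed by a box that is offset by only a few pixels, which is one reason box-level precision for grass weeds tends to be low.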

Table 1. Summary of precision results for the YOLOv5 XL, YOLOv5 S, EfficientDet-D4 and Faster R-CNN ResNet-50 deep learning architectures. Each model was trained on three scenarios: weeds in wheat, weeds in chickpea, and weeds in both wheat and chickpea. Cells coloured dark grey indicate best performance, with progressively lighter grey shading indicating lower precision. Cells coloured red indicate the poorest performance.

| Context | Algorithm | Approx. parameters (millions) | Rank | All classes | Annual ryegrass | Turnip weed |
|---|---|---|---|---|---|---|
| Ryegrass and turnip weed in wheat | YOLOv5 XL | 87.7 | 2 | 0.28 | 0.079 | 0.640 |
| | Faster R-CNN ResNet-50 | 41.5 | 4 | 0.178 | 0.048 | 0.471 |
| | EfficientDet-D4 | 19.5 | 5 | 0.184 | 0.024 | 0.506 |
| | YOLOv5 S | 7.3 | 1 | 0.300 | 0.080 | 0.600 |
| Ryegrass and turnip weed in chickpea | YOLOv5 XL | 87.7 | 1 | 0.136 | 0.036 | 0.116 |
| | Faster R-CNN ResNet-50 | 41.5 | 4 | 0.058 | 0.010 | 0.034 |
| | EfficientDet-D4 | 19.5 | 5 | 0.055 | 0.011 | 0.015 |
| | YOLOv5 S | 7.3 | 2 | 0.130 | 0.050 | 0.084 |
| Ryegrass and turnip weed in wheat and chickpea | YOLOv5 XL | 87.7 | 2 | 0.288 | 0.069 | 0.577 |
| | Faster R-CNN ResNet-50 | 41.5 | 5 | 0.139 | 0.023 | 0.330 |
| | EfficientDet-D4 | 19.5 | 4 | 0.169 | 0.020 | 0.437 |
| | YOLOv5 S | 7.3 | 1 | 0.310 | 0.076 | 0.590 |

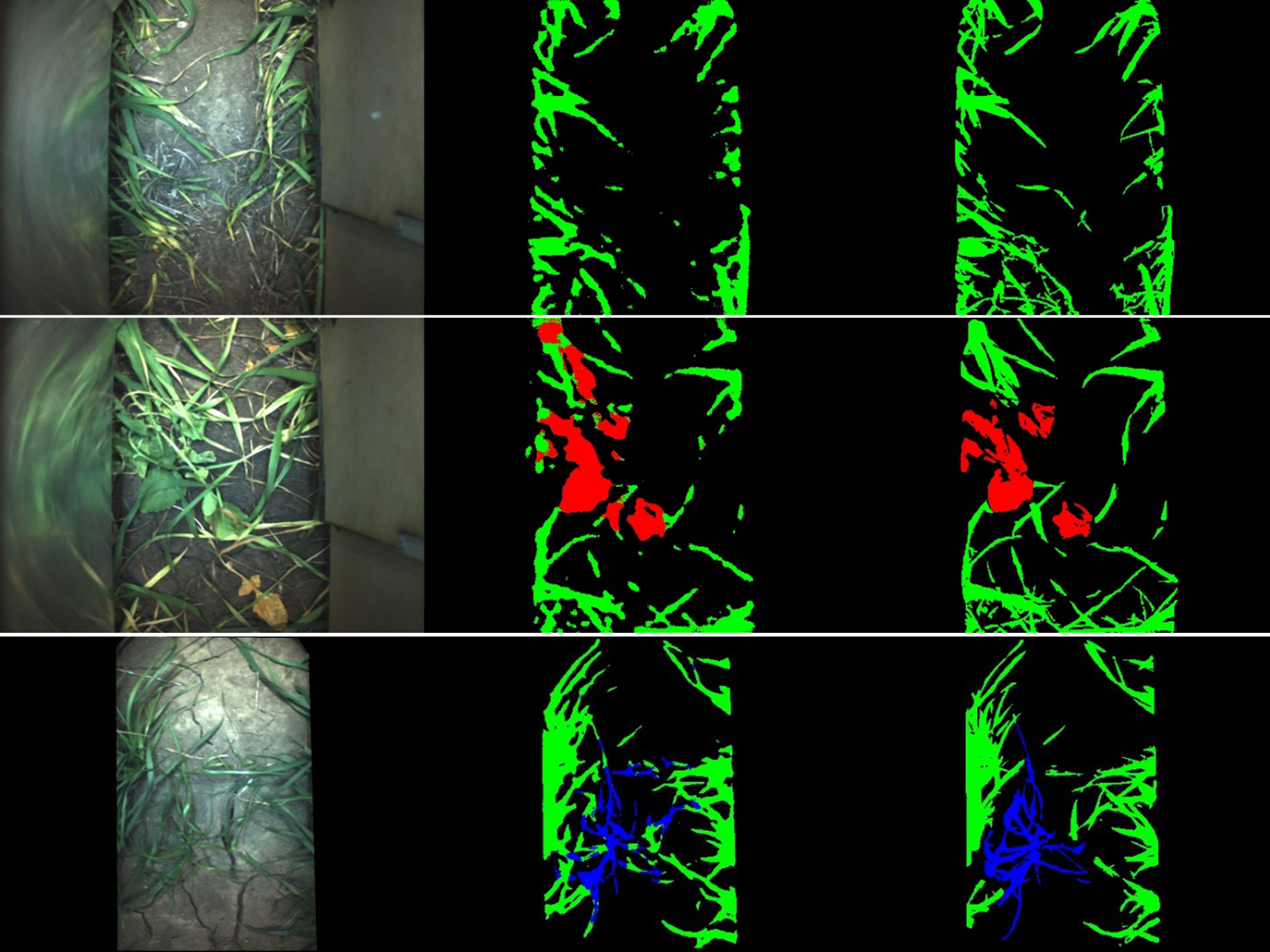

In the recently completed ‘Intelligent Robotic Non-Chemical Weeding’ project, part of a GRDC Innovation Program, ML-based weed recognition algorithms were developed for turnip weed and annual ryegrass plants present in wheat and chickpea crops at the late post-emergence stage. Weed images were collected by a camera housed within a shroud with a constant light source (Figure 2). The shroud allowed images of weeds beneath the crop canopy to be collected in a consistent light environment.

The collected images were then labelled using a labour-intensive, pixel-wise annotation process, producing a more precise algorithm that returns detections at the pixel level rather than at the bounding-box level used previously. The resulting algorithms achieved high weed recognition precision in wheat: 0.75 for turnip weed and 0.65 for annual ryegrass (Figure 3). These substantially higher levels of precision, compared with the early post-emergence results, are likely due to a combination of factors: the more precise pixel-wise annotation technique compared with the bounding-box approach, the consistent lighting used to collect all weed images, and the fact that all images were taken in the same field. Essentially, the more accurate weed recognition algorithm developed for the late post-emergence scenario was based on a more specific and precise weed image dataset.
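
With pixel-wise annotation, precision can be measured per class over pixels rather than boxes: of all pixels the algorithm assigns to a class, what share truly belong to it. The sketch below assumes a hypothetical integer encoding of the segmentation masks (0 background, 1 wheat, 2 broadleaf weed, 3 ryegrass, mirroring the classes shown in Figure 3) and tiny hand-made masks; it is not the project's evaluation code.

```python
# Hypothetical class encoding for segmentation masks.
CLASSES = {0: "background", 1: "wheat", 2: "broadleaf weed", 3: "ryegrass"}


def pixel_precision(predicted, truth, class_id):
    """Share of pixels predicted as class_id that are class_id in the ground truth."""
    predicted_pixels = 0
    correct_pixels = 0
    for pred_row, true_row in zip(predicted, truth):
        for pred, true in zip(pred_row, true_row):
            if pred == class_id:
                predicted_pixels += 1
                if true == class_id:
                    correct_pixels += 1
    return correct_pixels / predicted_pixels if predicted_pixels else 0.0


# Hypothetical 3 x 4 ground-truth and predicted masks.
truth = [
    [0, 1, 1, 0],
    [0, 2, 2, 3],
    [0, 2, 3, 3],
]
predicted = [
    [0, 1, 1, 1],
    [0, 2, 2, 1],
    [0, 2, 3, 3],
]
for class_id, name in CLASSES.items():
    print(name, round(pixel_precision(predicted, truth, class_id), 2))
```

Here one ryegrass pixel is mislabelled as wheat: wheat precision drops to 0.5, while ryegrass precision stays at 1.0 because every pixel predicted as ryegrass is correct. Precision alone does not capture missed weed pixels, which is why recall is usually reported alongside it.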

Figure 2. Autonomous platform with suspended shroud containing a digital image collection system and a constant light source.

Figure 3. Sample images of image segmentation. Each row is a different example. Images from the left to right columns are: the input RGB image, segmentation results from the ML algorithm, and pixelwise manually segmented ‘ground-truth’ training data. In the segmented images, green pixels are wheat, red pixels are broadleaf weed, and blue pixels are ryegrass weed.

Summary

The development of weed recognition technologies for SSWC is now focused on ML approaches that will enable accurate detection and identification of weeds in fallows and crops. As well as their high potential accuracy, this focus is being driven by recent ML developments and by the low cost and ready availability of suitable digital cameras and processors. Camera-based systems that use algorithms for fallow weed detection have demonstrated levels of accuracy similar to, if not better than, current reflectance-based sensing systems. Increasing interest in in-crop SSWC has resulted in a focus on more sophisticated weed recognition systems for use in both crop and fallow situations. Future SSWC in Australian grain production will be driven by highly accurate, ML-developed weed recognition algorithms. At present, however, there is a need to define the weed image dataset requirements, image annotation processes and appropriate ML architectures required to realise this opportunity.

Acknowledgements

The research undertaken as part of this project is made possible by the significant contributions of growers through both trial cooperation and the support of the GRDC. The authors would like to thank them for their continued support.

References

Felton, W (1990) Use of weed detection for fallow weed control. In 'Great Plains Conservation Tillage Symposium', Bismarck, ND, August 1990, pp. 241-244.

Haggar, RJ, Stent, CJ, Isaac, S (1983) A prototype hand-held patch sprayer for killing weeds, activated by spectral differences in crop/weed canopies. Journal of Agricultural Engineering Research 28, 349-358.

Woebbecke, DM, Meyer, GE, Von Bargen, K, Mortensen, DA (1995) Color Indices for Weed Identification Under Various Soil, Residue, and Lighting Conditions. Transactions of the ASAE 38, 259-269.

McCarthy, CL, Hancock, NH, Raine, SR (2010) Applied machine vision of plants: a review with implications for field deployment in automated farming operations. Intelligent Service Robotics 3, 209-217.

Sujaritha, M, Annadurai, S, Satheeshkumar, J, Kowshik Sharan, S, Mahesh, L (2017) Weed detecting robot in sugarcane fields using fuzzy real time classifier. Computers and Electronics in Agriculture 134, 160-171.

Thompson, JF, Stafford, JV, Miller, PCH (1991) Potential for automatic weed detection and selective herbicide application. Crop Protection 10, 254-259.

Tufail, M, Iqbal, J, Tiwana, MI, Alam, MS, Khan, ZA, Khan, MT (2021) Identification of Tobacco Crop Based on Machine Learning for a Precision Agricultural Sprayer. IEEE Access 9, 23814-23825.

Visser, R, Timmermans, AJM (1996) WEED-IT: a new selective weed control system. Proceedings of the SPIE Photonics East Conference, Boston, USA.

Walsh, MJ, Squires, CC, Coleman, GRY, Widderick, MJ, McKiernan, AB, Chauhan, BS, Peressini, C, Guzzomi, AL (2020) Tillage based, site-specific weed control for conservation cropping systems. Weed Technology 1-7.

Wang, A, Zhang, W, Wei, X (2019) A review on weed detection using ground-based machine vision and image processing techniques. Computers and Electronics in Agriculture 158, 226-240.

Contact details

Michael Walsh

University of Sydney

Ph: 0448 847 272

Email: m.j.walsh@sydney.edu.au

GRDC Project Code: UOS2002-003RTX, UOS1806-002AWX